Generative AI – What Happens When The Novelty Wears Off?

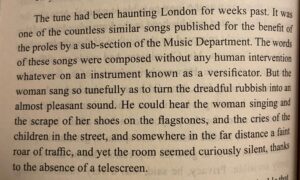

The “Versificator” from George Orwell’s “1984” – AI slop has been around a while!

This is the second of two connected articles. Part one focused on my own experience of generative AI, and questions around what makes creative work meaningful. In part two I’m looking outward and forward.

How have people tended to respond to new cultural and creative technologies over time? What might these patterns tell us about where generative AI is heading next, and what might this mean for creativity?

When the excitement dies down

After spending time experimenting with generative AI tools to make creative outputs like pictures, music or videos, it is tempting to leap straight to big conclusions. Some people see a creative revolution underway. Others see the end of art, as the machine replaces the human. Neither response feels particularly convincing. What I think is more accurate is something less dramatic and more familiar. People will play with this technology. They will be impressed. They will enjoy it for a while. And then, gradually, most of them will get bored.

This is not because the technology is trivial. In many cases the outputs from AI are technically convincing and sometimes astonishingly polished. But novelty has a limited lifespan, particularly when it arrives without effort. When creation becomes instantaneous, no matter how ‘good’ the result, it loses the friction that comes from effort and from working to shape one’s own expression with purpose.

For most people it isn’t interesting beyond the first few encounters. Once you’ve seen what the button does, pressing it again isn’t very exciting. The novelty wears off, and indifference sets in. I certainly won’t be using Suno – the Versificator of today – to create any music. I will be sticking to the old-fashioned way of doing things: making music by hand on a computer, using a DAW programme that’s been around in essentially the same form for years. There will be inevitable change, consolidation and disruption here too, but no one I know will be trading the ability to drill down into the finest details of personally making music tracks for any sort of one-click creation solution.

What usually happens next

We have seen this pattern repeatedly. New tools arrive wrapped in claims of transformation, democratisation or disruption. They spread quickly, generate headlines and attract speculative investment (not necessarily in that order). The initial excitement then fades. The tools that genuinely help people do what they already care about remain. The rest are absorbed into the background or quietly abandoned. Over time they become part of the general state of the art, often in ways that feel obvious in hindsight.

Music offers no shortage of examples. The synthesiser was once seen as an existential threat to musicianship. Sampling was supposed to destroy originality. Downloads were meant to kill the industry, and streaming was meant to bury it once and for all. In every instance things have adapted and evolved, folding once-revolutionary developments into common practice. Parts of the industry are now making more money than at any point in their history. The core controversy today is not whether the industry is dead or alive, but how the vast spoils are shared or hoarded, even as it has become the norm for anyone to be able to write, record and release music to the world in a few very easy steps.

More recently, we were told that blockchain would rescue the music industry. NFTs would rebuild creative economies, and new technical architectures would finally fix all the breaks and inefficiencies. None of these developments proved revolutionary – and certainly not terminal – as so few actually worked. Some changed certain working practices. Some shifted power. Some failed outright. A system can improve or replace another system. But a system, in and of itself, cannot deal with human issues like motivation, laziness, self-interest and plain old human error. For this reason a noticeably high proportion of technical solutions which promised to be silver bullets to revolutionise the music industry have failed.

Entertainment isn’t labour

So much for industry systems. None of these industry advances removed the basic distinction between people who want to make things, and people who simply want to experience (or “consume”) them. So what about creativity?

Generative AI fits into the same historical pattern. Most people will engage with it as entertainment rather than as a creative discipline. They will enjoy making novelty songs, images or texts for amusement or curiosity. For a small minority, this will spark deeper interest. For the vast majority, it will not. Culture at scale has always worked this way. Most people do not want to work for their entertainment. They want something immediate, engaging and easy to discard. That is not a criticism. It is simply how mass culture functions.

This helps explain why attempts to market generative AI to a broad audience often default to gimmicks. Recent advertising for AI music tools frames them as ways to generate personalised songs as gifts or jokes. AI image and video generators can produce better results than most non-experts could manage themselves, but only at a basic level. This reflects an understanding that the mainstream appeal of generative creativity lies to some extent in novelty, not mastery. If it’s good enough to do a simple job, great. Otherwise you will still need an expert to help you. The technology underneath may be extraordinary, but everyday demand rarely runs very deep.

A lesson from the inside

I learned this lesson long before AI entered the conversation. When I first worked at Capital Radio, I expected to be surrounded by people who cared deeply about music. In reality, only a small number did, mostly those whose jobs required it. For everyone else, music was background. It filled space while attention was directed elsewhere. That was not a failure of taste or anything else. It was simply a reminder that cultural production and cultural consumption operate on very different terms. Even people who work in close proximity to creative output every day don’t necessarily care all that much about creativity itself.

Methods of expression will change, as they always have, but creativity is rooted in meaning, not processes or systems. People do not like empty work for long. When the novelty wears off, new tools are absorbed into the broader creative landscape and judged by the same standards as everything else. What matters is not how something was made, but whether anyone cares about the result.

Of course, the passage of time will bring gradual but relentless improvements in how all AI systems operate, both in how they respond to prompts and in what they produce. This will have widespread and profound effects. Over time, it will be easier to include AI-generated elements in genuinely human creations. It will become harder to spot AI fakes as things become more sophisticated. But this won’t erode creativity itself, or the essentially human role in it.

Where things become more complicated

Where the picture becomes more complex is around influence, control and agency. This applies to deep cultural power and economic leverage, not just technical control.

Generative AI systems rely on existing material, and the rights in that material are important. Some rights are purely economic, but others are personal. Monetary payments can address some of the problems, but not all. There is a meaningful difference between drawing inspiration from a tradition and extracting value from it without consent. When systems are trained on creative work without transparency or permission, the relationship becomes parasitic rather than collaborative.

This becomes especially clear when generative tools move beyond abstract patterns or novelties, and into recognisable people, performances and narratives.

Over Christmas I stumbled across what looked like a trailer for a sequel to the film The Holiday. You can check it out here: https://youtu.be/ouE1bMTzWQU?si=29SvLxPhbPLaxF-A

At first glance it was very convincing, and it took a little while to realise that it was an AI fake. It reused familiar actors, visual language and emotional cues in a way that felt natural and much as one would expect. After a minute or so the ‘uncanny valley’ effect set in. You could tell that while it all looked very close to natural, it wasn’t exactly natural. There was something a bit odd about the skin tones, which looked a bit too perfect and shiny. Some of the lighting didn’t quite match the context. A few age lines looked a bit too cartoon-like, and there was almost too much detail in the hair. The voiceover was, in retrospect, a bit of a giveaway.

Interestingly enough, it was my ten-year-old daughter who instantly spotted it as “AI”. This is apparently fairly common – Rick Beato has mentioned that his kids can spot AI-generated music almost instantly, while it takes him a bit longer. It certainly took me a little while to pick up on this Holiday 2 trailer being an AI fake. Perhaps I was taken in through naivety, or was just giving it the benefit of the doubt on the assumption that it was genuine – either way I was still duped.

But the video title and description made it very clear this was a deliberate AI creation. It was framed as a fan tribute, a sort of “what if” that someone had made on their own, presumably with no input from any of the participants and stakeholders in the original film.

Sure, there are questions about rights infringements. But what’s going on in the wider context is arguably more significant. If an individual can create something like this, what can a corporation do? Who gets to decide what is generated, and under what constraints?

Control matters more than novelty

This is where questions of control matter more than questions of novelty. We have very little visibility into the data that large language and generative AI models are trained on. We don’t know how that data is curated, or how prompts are transformed into outputs.

We do know that these systems hallucinate, making up (often very convincing) outputs which are nothing more than a garbled mixture of what the system happens to have picked up in the training data, rearranged and spat out. Sometimes these systems simply forget or miss things out, which can be very hard to spot.

We know that they are extremely good at pattern recognition and extremely poor at judgement. That is why they excel at guidance and structure, but create material that is by definition generic and for the most part creatively and artistically unsatisfying. It is also why the motivations of those who build and deploy these systems matter far more than the tools themselves.

George Orwell grasped something close to this long before any of this technology existed. In 1984 he imagined popular songs composed automatically by a machine called a “versificator”, producing endless output designed to be consumed without thought:

“…these songs were composed without any human intervention whatever on an instrument known as a versificator […] The woman sang so tunefully as to turn the dreadful rubbish into an almost pleasant sound.”

This is extremely prescient, not only in foreseeing automatic music but in foreseeing that it would be generic and poor quality – what we now call “AI slop”. But the really unsettling part is that he understood its cultural and political potential. It was not meant to inspire or endure, and it certainly wasn’t meant to be “art” in any sense of human expression. It was meant to be sound to fill the air. Melodies carry lyrics, and so serve the political purpose: relatively pleasing tunes could be used to spread the sinister message of the Party. Without human creative intervention, it was “dreadful rubbish”, tolerable only because the material it mimicked had once had some human artistic merit.

Intention and control were the dominant forces at play in 1984. Intention and control are exactly the areas we need to be very wary of with AI today.

What will feel the pressure, and what will endure

Generative AI is not the end of creativity because creativity does not disappear when tools change. What disappears, if anything, is attention. When outputs become effortless, they become easier to ignore. Meaning still requires engagement, and engagement still requires investment, whether of time, judgement, effort or care.

There are, however, parts of the creative economy that will feel pressure. They may not disappear altogether, but they will change. Ever since the Industrial Revolution, activities based largely on mechanical process or procedure have tended to be done quicker, better and cheaper by machines than by people.

Certain forms of music, video or other production that rely on technical execution without personal interaction are vulnerable. Some aspects of sound engineering, sample libraries and generic background music services will be challenged, as will certain video recording and editing services. In today’s world, anything ‘creative’ which is intended to function as background context rather than seeking its own identity and audience will be easy to replace if there’s no real need for identity, relationship or communication.

What will not change is the human nature of creative collaboration. This applies in a commercial context just as much as it does to the creative process. Film and television commissioners, game developers and producers are specialists in their own disciplines. They are not music composers or performers, and they do not want to be. Likewise composers and music producers will need video and other creative services, and will turn to specialists in those fields to get things done to the professional standards they need.

Anyone who wants quality, judgement and accountability will continue to work with expert people rather than interfaces. Creativity does not exist in isolation. It lives in conversations, revisions, disagreements and shared understanding. No technical system, AI or otherwise, replaces that.

Between alarmism and complacency

This is why it’s important to strike a balance between alarmism and complacency. There is no need to panic about threats to creativity itself. There is, however, a need to remain literate and alert. Tools that shape culture also shape incentives, and incentives shape behaviour. Awareness is what preserves agency. It gives you the perspective to see things evolving.

I’m writing this in early 2026, at a moment when generative AI still feels new enough to provoke extreme reactions. It would be sensible to return to these questions at the end of the year and see what has changed. My suspicion is that some of the noise will have faded. A few tools will have found their place. Some high-level partnerships and collaborations will have helped to bed things in further. Other systems will have been overtaken and left by the wayside. Creativity will still be here, looking very much as it always has, shaped by human effort, meaning and judgement.

People will always want something new, and something that speaks to them in their world as individuals in their contemporary terms. This is the backbone of culture and its spinoffs, media and entertainment very much included. Creativity and its associated industries will adapt. The small group of people who actually care about making things will carry on doing so, using whatever tools help them do that work better, and to keep up the conversations with listeners, audiences, viewers, readers and everyone else. That has always been the pattern. Things will change, and for many there will be disruption ahead, but I genuinely don’t think that creativity and expression are under any sort of threat.

Final thoughts

What we absolutely must be aware of is what’s happening behind the scenes: who’s calling the shots with AI in all its forms, and how we can avoid being caught out or manipulated by AI-generated products.

The Roman Empire grew stronger by absorbing and assimilating external cultures, languages and practices. It was complicated and messy, and there were (rather obvious) winners and losers. Human creativity has done exactly the same, adapting and surviving all manner of changes and potential threats over long stretches of history. Creativity itself isn’t under threat from what’s going on with AI. It will absorb, adapt and evolve.

However, just as with the Roman Empire, geopolitical forces will dictate where this is heading in the longer term. Culture, power and politics always have been, and will remain, in constant tension. As governments struggle to compete with technology corporations for influence over society, it’s anyone’s guess where this will end up.

What is certain is that every one of us will be affected in some way as a result, which makes vigilance, critical thinking and cultural literacy more important than ever.